InsideTheStack: How I Am Architecting an AI-First Startup from Scratch

No legacy systems. No inherited tech debt. No "we will add AI later." How I am designing CarYaar's architecture with AI as a core assumption from day one, not a bolt-on.

No legacy systems. No inherited tech debt. No "we will add AI later."

Just a blank repo and a decision to build differently.

I am the solo technical co-founder at CarYaar. We are building an automotive marketplace in India. Three co-founders. One engineer. That engineer is me.

Most startups inherit their architecture from whatever the first engineer knew best. Rails because they came from a Rails shop. Django because that is what the bootcamp taught. Microservices because their last company did microservices, even though they have three users.

We had a rarer opportunity. Design the entire system from zero with AI as a core architectural assumption. Not a feature to add later. Not a checkbox for the pitch deck. A foundational design choice that shapes everything from the data model to the API layer to how the product thinks about its own data.

This post is about what that decision actually looks like in practice.

What "AI-first" actually means

AI-first does not mean "use ChatGPT somewhere in the product."

It means every architectural decision assumes that AI will be part of the system from day one. Not bolted on. Not integrated later. Present in how the system is designed to store, retrieve, and act on information.

Concretely, this changes four things.

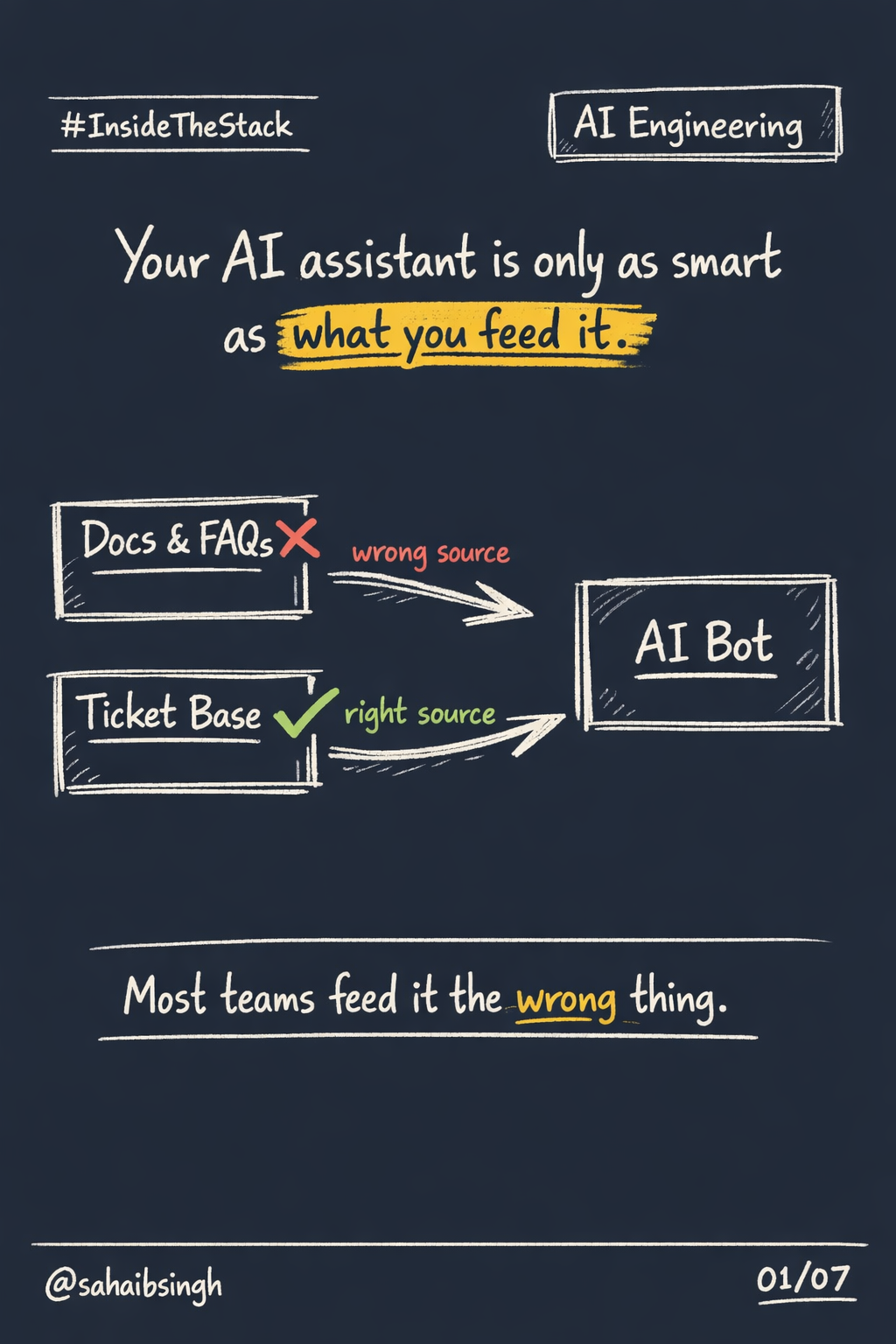

Data models are designed for retrieval, not just storage. Every record is structured so an AI system can find, understand, and act on it without complex joins or manual preprocessing. This sounds obvious. It is not how most databases are designed. Most databases are designed for human queries and dashboard reports. AI agents need data organized differently.

APIs are structured for agent consumption, not just human interfaces. If an AI agent needs to take an action in your system, can it? Most startup APIs assume a frontend developer is the consumer. Agent-callable APIs assume a machine is the consumer. The documentation requirements, the response structures, the error handling are all different.

Content pipelines assume AI will read the data. Text is not just displayed to users. It is classified, embedded, and made searchable semantically. This means building vector representations into the content pipeline from the start, not as a separate project six months later.

Search is semantic from the beginning. Not keyword-based with AI patched on top when keyword search stops working. The retrieval layer is designed to understand meaning, not just match strings.

None of this requires cutting-edge research. It requires making the decision before writing the first line of code.

The zero-legacy advantage

Most companies trying to adopt AI right now are fighting their own systems.

Their data lives in silos that were never designed to be connected. Their APIs were built for human users clicking through interfaces. Their database schemas assume a world where only people read the data. Their permission models predate the concept of an AI agent that needs access to multiple systems simultaneously.

The result is that "AI transformation" at most companies is actually just infrastructure cleanup. Consolidating data. Rebuilding APIs. Restructuring schemas. Creating retrieval layers that did not exist before. The AI part is the easy step. The plumbing is the hard part.

When you start from scratch, you skip all of that.

No ETL pipelines to retrofit. No permission models designed before AI agents existed. No schemas that assume only humans query them. No integration debt accumulated over years of "we will fix it later."

This is the advantage nobody talks about when discussing AI-first startups. It is not about having better models or smarter prompts. It is about not having to fight your own infrastructure to use them.

Building with Claude Code as the primary tool

I am building the entire MVP with Claude Code. Not as a side experiment. Not as a productivity hack. As the primary development tool for a production startup that intends to serve real users in a real market.

Here is what that looks like on a typical day.

Architecture decisions are mine. Claude does not decide how modules connect, where data boundaries sit, what gets cached, or how services communicate. These are systems thinking problems. They require understanding the business, the market, the user behavior, the scaling path. No AI tool makes these decisions well because they require context that lives in my head and in conversations with my co-founders, not in the codebase.

Execution is Claude's. Once I know what needs to be built, Claude writes it faster than I can type it. API endpoints, database models, form validation, test coverage, utility functions. The implementation work that used to take a full day now takes an hour. The code is clean. It follows the patterns I established. It handles edge cases I would have forgotten.

Review is mine again. Every pull request, every merge, every deploy goes through my judgment. I read what Claude wrote. I check the assumptions. I test the boundaries. I decide what ships and what gets rewritten.

The pattern is: think, instruct, review, ship.

Not: prompt and pray.

This distinction matters more than any benchmark. The developers who get real value from AI coding tools are the ones who bring strong opinions to the session. If you do not know what good looks like, you cannot tell when Claude gives you something that is not good enough.

What I designed for that most startups skip

When you know AI will be part of your system from day one, you design for things that most early-stage startups ignore entirely.

Structured data from day one. Not "we will clean it up when we have more resources." Every record is AI-readable the moment it enters the system. Fields are typed. Relationships are explicit. Metadata is captured at creation, not reconstructed later.

Retrieval-first schemas. The database is not just a place to store things. It is designed so an AI agent can find what it needs quickly, with the right context, without full-table scans or multi-step lookups. This affects table design, indexing strategy, and how denormalization decisions are made.

Agent-callable APIs. Every endpoint is documented and structured so that an AI system can discover it, understand what it does, know what parameters it needs, and parse the response reliably. This is different from building APIs that work when a developer reads the docs and writes a custom integration.

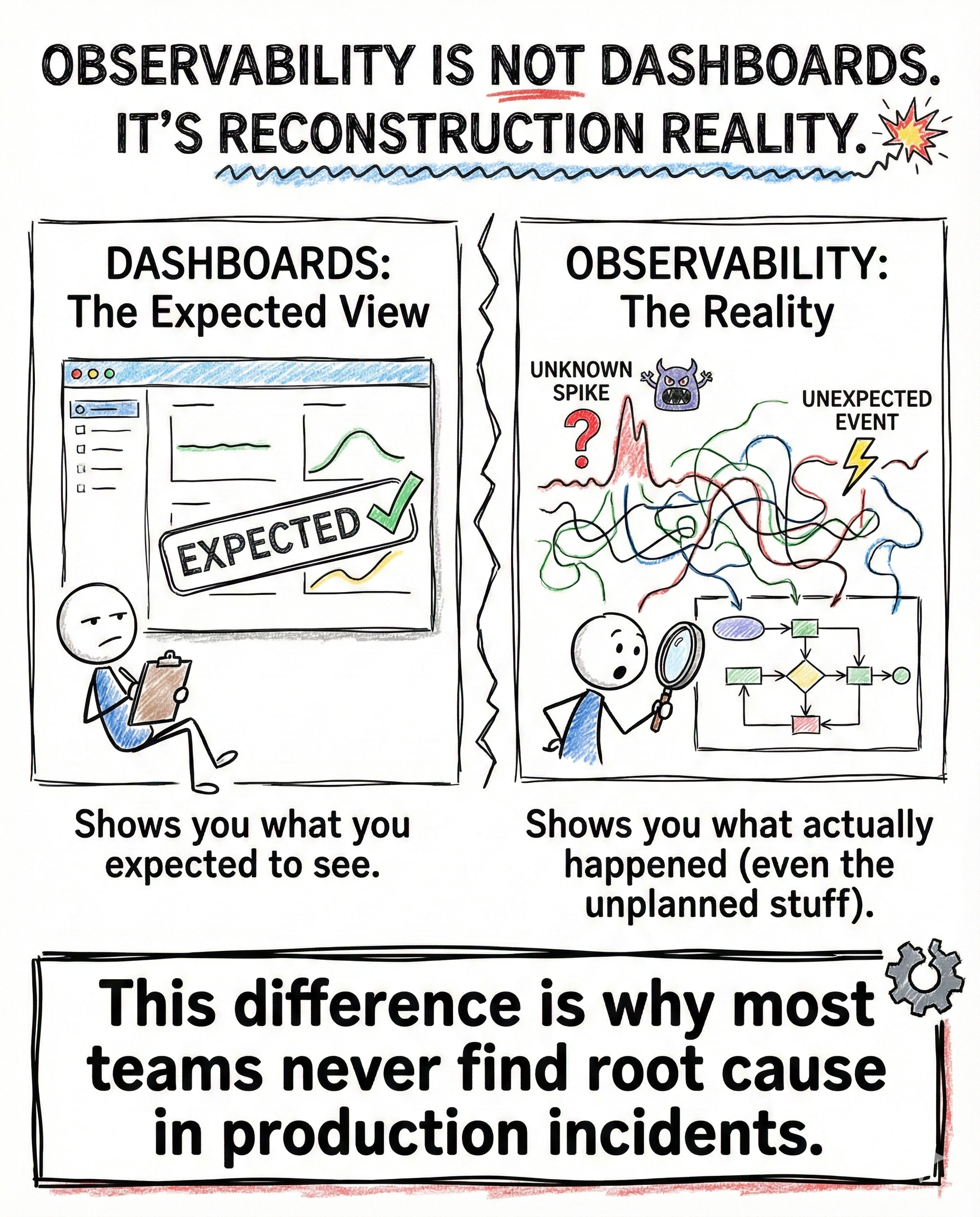

Observability baked into the architecture. If an AI agent makes a decision in the system, I need to see what it decided, why it decided that, what data it used, and what it ignored. This is not logging as an afterthought. It is logging as architecture. When an AI-driven system makes a mistake in production, you need the ability to reconstruct its reasoning. Without observability, you are debugging a black box.

What I intentionally did not build

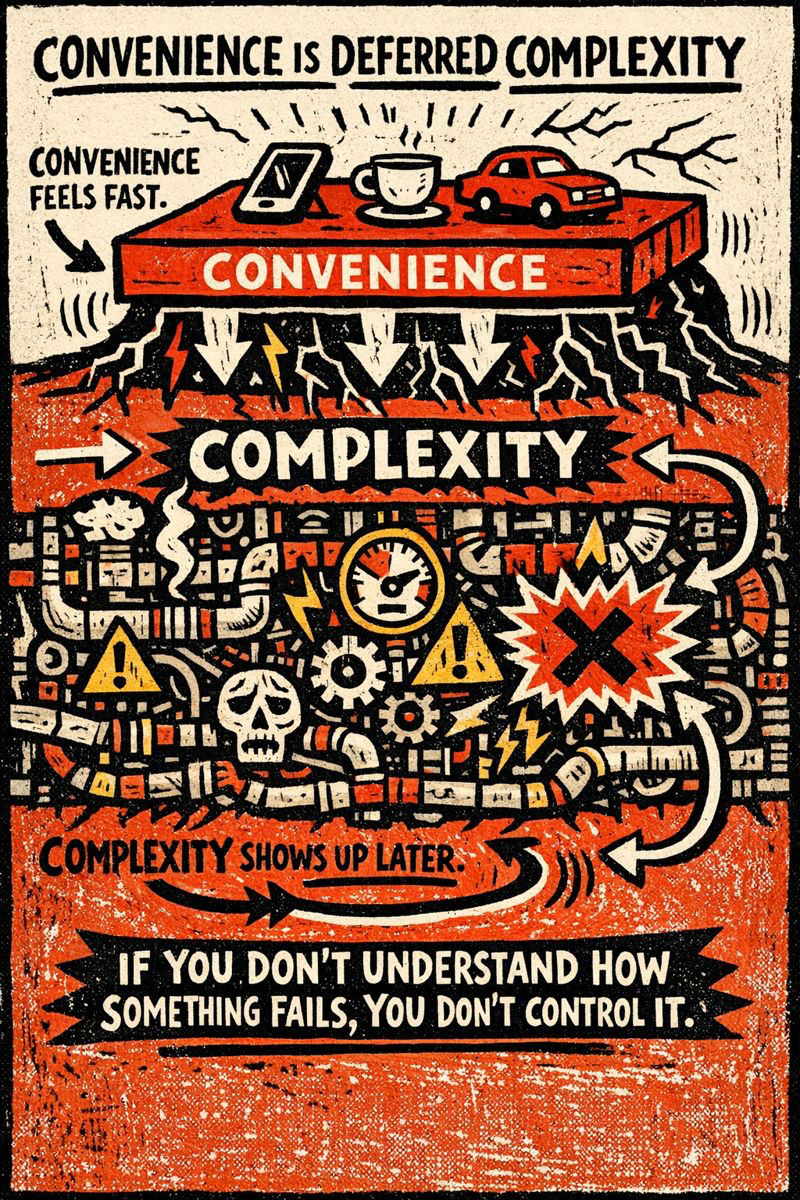

The hardest part of building AI-first is not what you add. It is what you resist adding.

No custom ML models yet. The foundation models available today are good enough for everything the first version of the product needs. Training custom models before you have product-market fit is a waste of time and money. The models will improve. Your training data will change. The product requirements will shift. Build on foundation models, customize with prompts and retrieval, and revisit custom training when you have real scale and real data that justifies it.

No complex agent orchestration. The system uses single-purpose AI tasks that each do one thing well. One task classifies. Another retrieves. Another generates. They do not coordinate autonomously. Multi-agent orchestration is a scaling problem. It is not a launch problem. Building it before you need it creates complexity you will pay for in debugging time for months.

No AI for the sake of AI. If a rule-based system solves a problem cleanly, I use the rule-based system. AI is for the problems where rules break down. Where the inputs are too varied, the patterns too subtle, the edge cases too numerous for explicit logic. Using AI where a simple conditional would work is not innovation. It is waste.

Premature AI complexity is the new premature optimization.

The Indian market reality

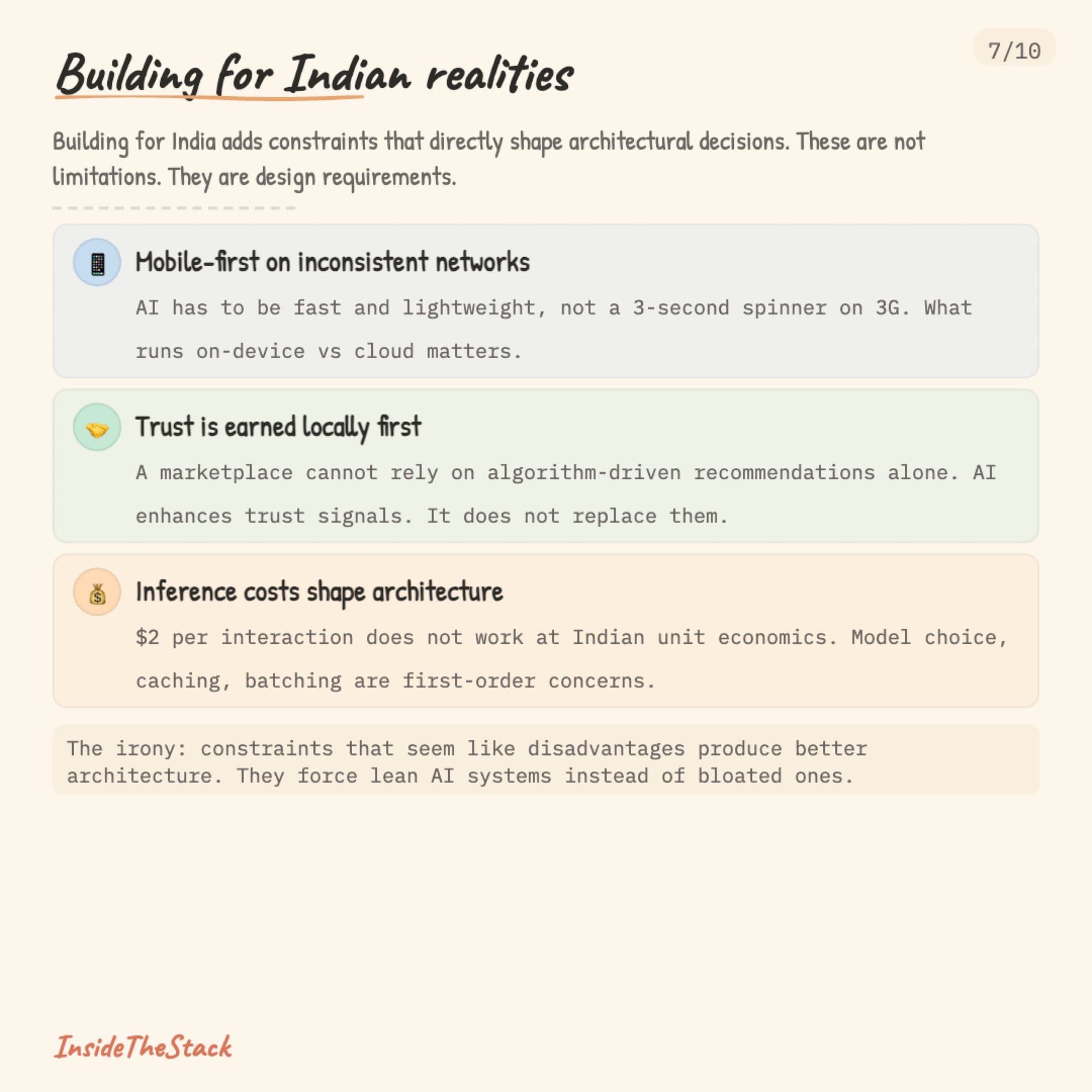

Building for India adds constraints that directly shape architectural decisions.

Users are mobile-first and often on inconsistent networks. The AI components of the system have to be fast and lightweight. A three-second spinner while a model processes a request is not acceptable when your user is on a 3G connection in a Tier 2 city. This means being deliberate about what happens on-device versus in the cloud, what gets cached, and how gracefully the system degrades when the connection drops.

Trust is earned differently in Indian marketplaces. A platform connecting car owners with service providers cannot rely on algorithm-driven recommendations alone. Trust is built locally. Through word of mouth, through verified reviews, through repeated reliable interactions. The AI has to enhance and surface the signals that build trust. It cannot replace the human judgment that creates it.

Price sensitivity is a real constraint, not a problem to be solved later. You cannot build an AI-heavy system that costs two dollars per user interaction and expect it to work at Indian unit economics. Inference costs are a first-order architectural concern. They shape which models you use, how aggressively you cache, how you batch requests, and where you draw the line between AI-driven and rule-driven features.

These constraints are not limitations. They are design requirements. And they force you to build lean, efficient AI systems instead of bloated, expensive ones. The irony is that the constraints that seem like disadvantages produce better architecture.

The real job of a solo technical co-founder

People ask what I do all day.

The answer is mostly not coding.

My time breaks down roughly like this:

40% architecture and system design decisions. Which data model supports the next three features, not just the current one. Where the API boundaries sit. How services will communicate when we scale. What to build now versus what to defer.

25% reviewing and testing what Claude Code produces. Reading the code. Checking the assumptions. Running edge cases. Verifying that what was built matches what was intended. This is where the real quality control happens.

20% product thinking, data modeling, and API design. Working with Maxson and Joel on what the product needs to do. Translating business requirements into technical specifications. Designing the data structures that everything else depends on.

15% actual manual coding. The parts that require judgment calls Claude cannot make. Complex integrations. Security-sensitive logic. The glue code that connects systems in ways that need human understanding of the broader context.

Claude Code did not make me a faster coder. It made me a full-time architect who happens to also ship production code every day.

That is the real shift for technical co-founders building with AI tools. Not speed. Leverage. The ability to operate at the altitude of system design while still being the person who pushes to production.

The takeaway

AI-first is not a feature you add to a product.

It is an architectural decision that shapes how the product stores, retrieves, and reasons about its own data.

The best time to make that decision is before you write your first line of code.

The second best time is to accept the retrofit cost and start now.

But if you have the rare luxury of a blank repo and no legacy constraints, do not waste it by building the same architecture everyone else built five years ago and hoping AI will fit into it later.

It will not.

InsideTheStack: how real systems actually work.