Local LLM Playbook

Run strong models on your machine without a GPU

For a long time, local LLMs were treated like toys.

Slow, limited, and mostly for hobbyists.

That narrative is outdated.

Local models are not the future.

They are already here, and they are good enough for real work.

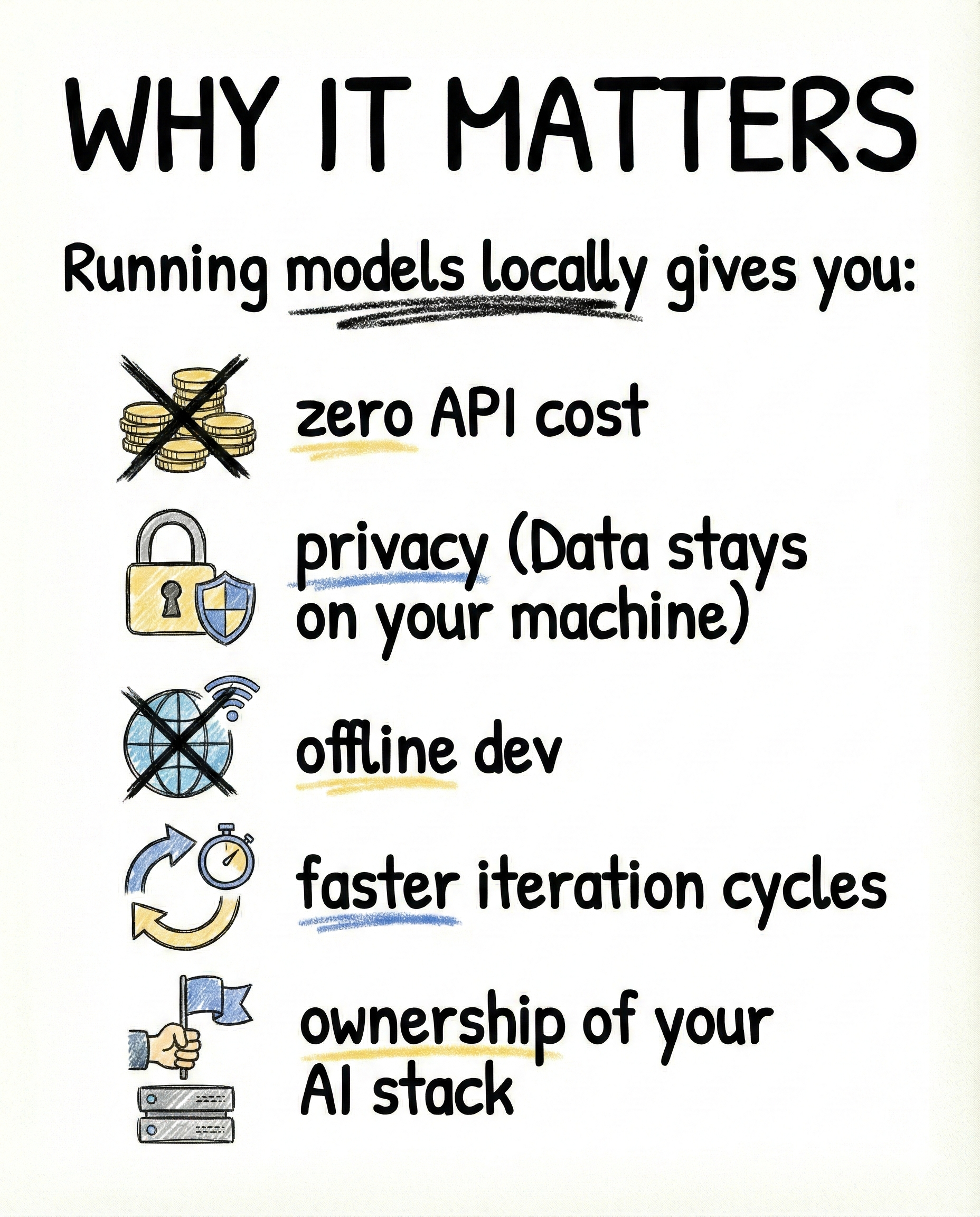

Why running models locally matters

When you run LLMs on your own machine, you unlock things cloud APIs cannot give you:

- zero per-token cost

- full data privacy

- offline development

- tighter feedback loops

- real ownership of your AI stack

This changes how you build. You stop optimizing prompts for cost and start optimizing for clarity and speed.

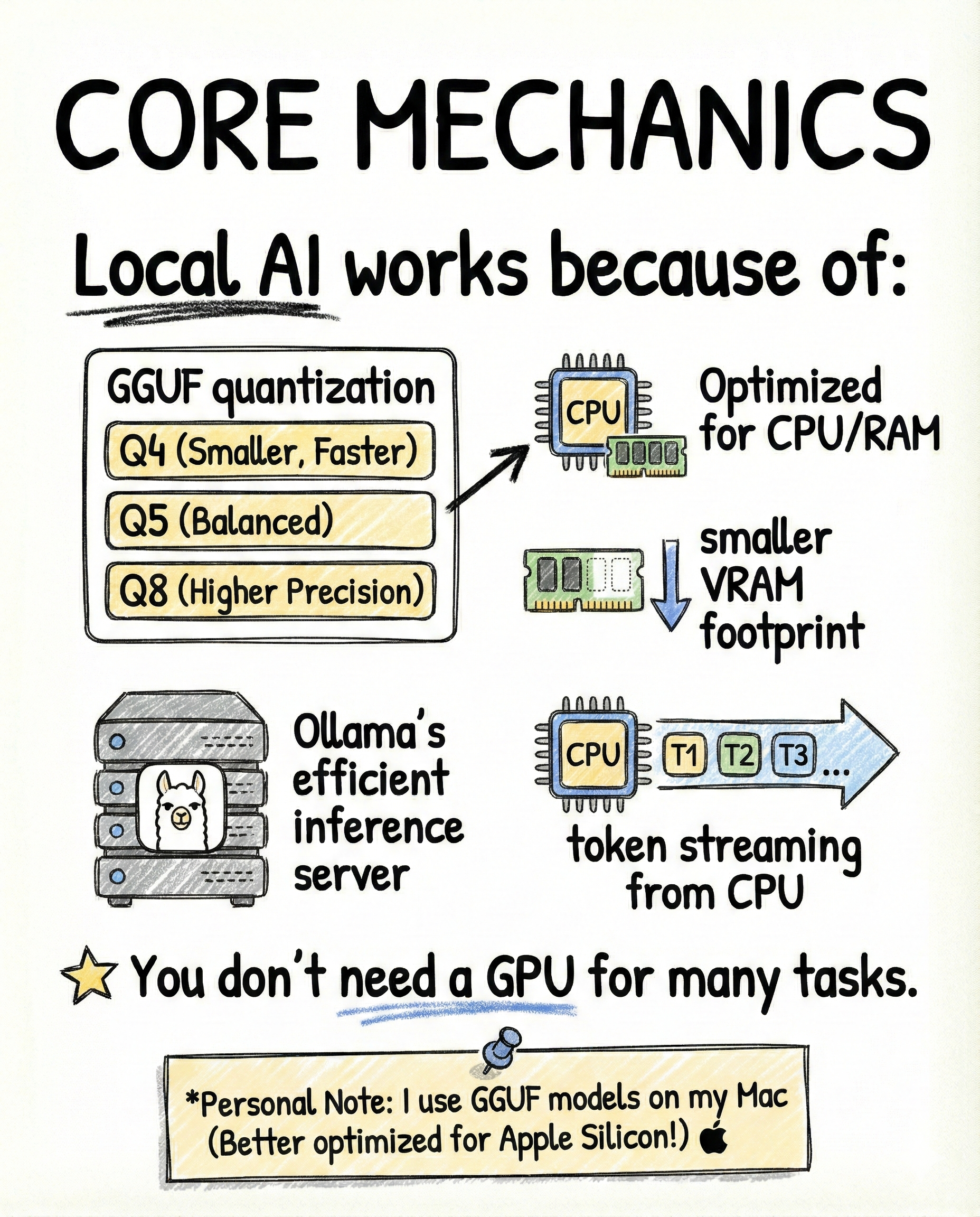

Why local LLMs actually work

Local AI works because of a few non-obvious engineering choices:

- GGUF quantization (Q4, Q5, Q8) that compresses models aggressively

- drastically smaller memory and VRAM footprints

- efficient CPU-first inference pipelines

- token streaming that feels responsive even without a GPU

Most everyday tasks do not need FP16 precision or massive GPUs.

They need “good enough” intelligence, fast.

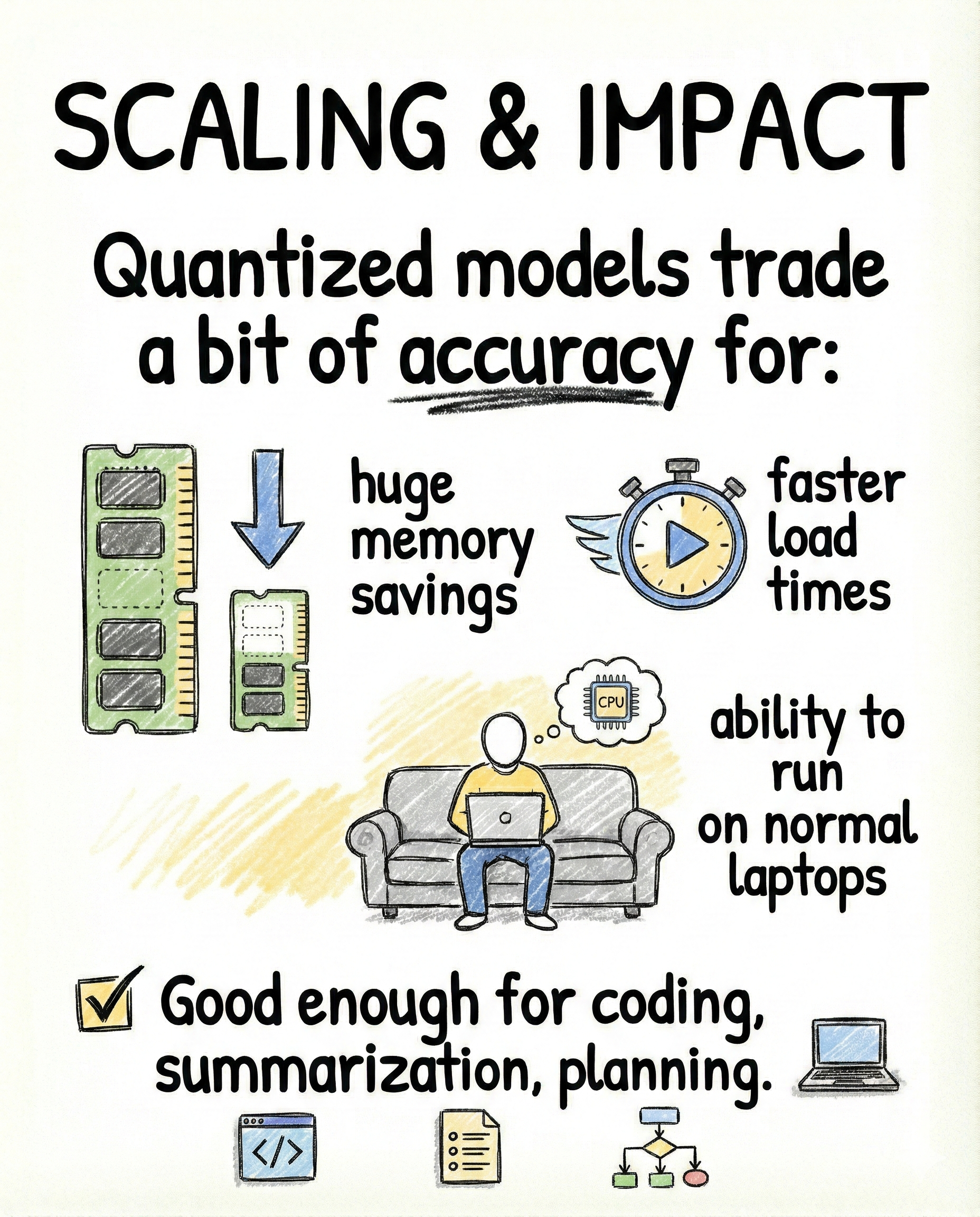

Quantization is the real enabler

Quantized models intentionally trade a bit of accuracy for massive gains:

- huge memory savings

- faster model load times

- ability to run on normal laptops

- predictable performance

For tasks like coding assistance, summarization, planning, and data generation, this tradeoff is more than acceptable.

In practice, the difference is barely noticeable.

The usability difference is massive.

Scaling impact in the real world

Quantization and local inference make it possible to:

- spin up multiple models without cost anxiety

- test prompts instantly

- run batch jobs overnight

- prototype without rate limits

This is why local models have quietly become a default tool for serious builders.

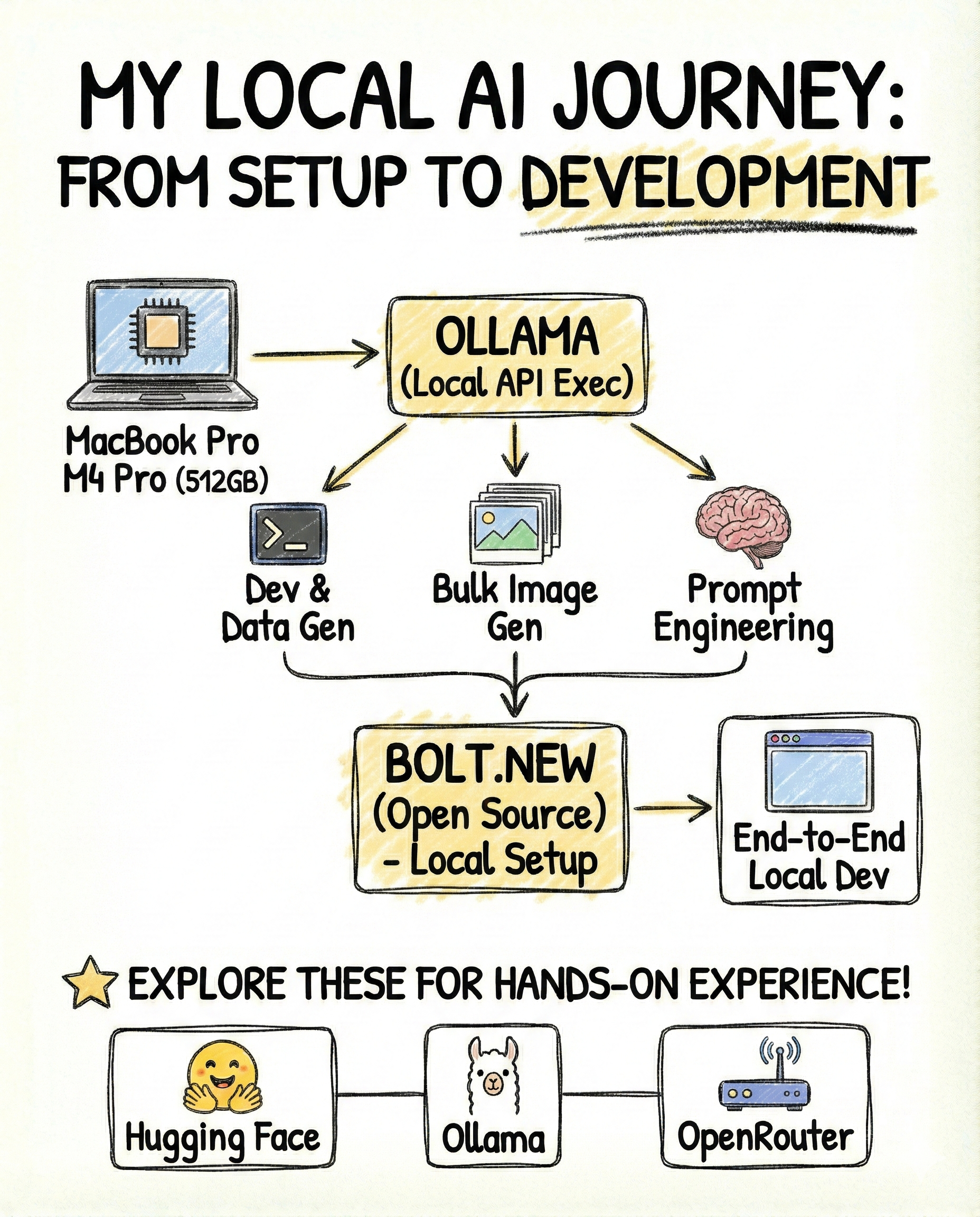

My local setup and how I use it

I run my local stack on a MacBook Pro M4 Pro with 512GB storage.

I started with Ollama as the base layer. Its local inference server exposes a clean API, which I use directly in development for generating structured data and testing prompts.

From there, I used local models for:

- bulk image prompt generation

- prompt engineering and refinement

- offline experimentation without burning API credits

- running Bolt.new’s open-source stack locally for end-to-end AI-driven development

At this point, local AI is not a side tool for me.

It is part of my default workflow.

Builder takeaway

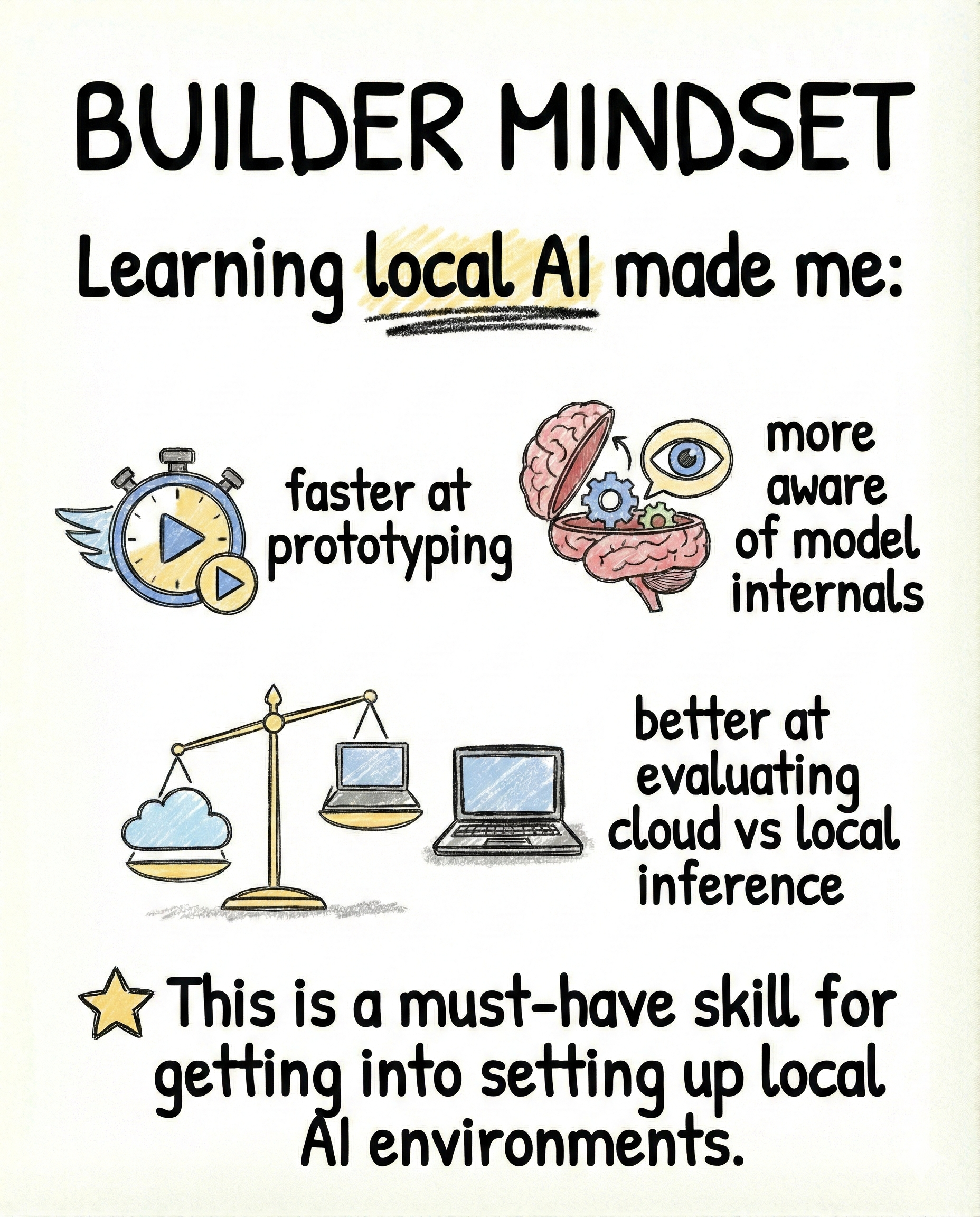

Learning to run models locally makes you:

- faster at prototyping

- more aware of model tradeoffs

- better at choosing between cloud and local inference

- less dependent on external platforms

This is no longer optional knowledge.

For builders in 2025, local AI is table stakes.

Closing

This post is part of InsideTheStack, focused on hands-on AI systems that actually ship.

Follow along for more practical guides.

#InsideTheStack #LocalAI #Ollama